Vision

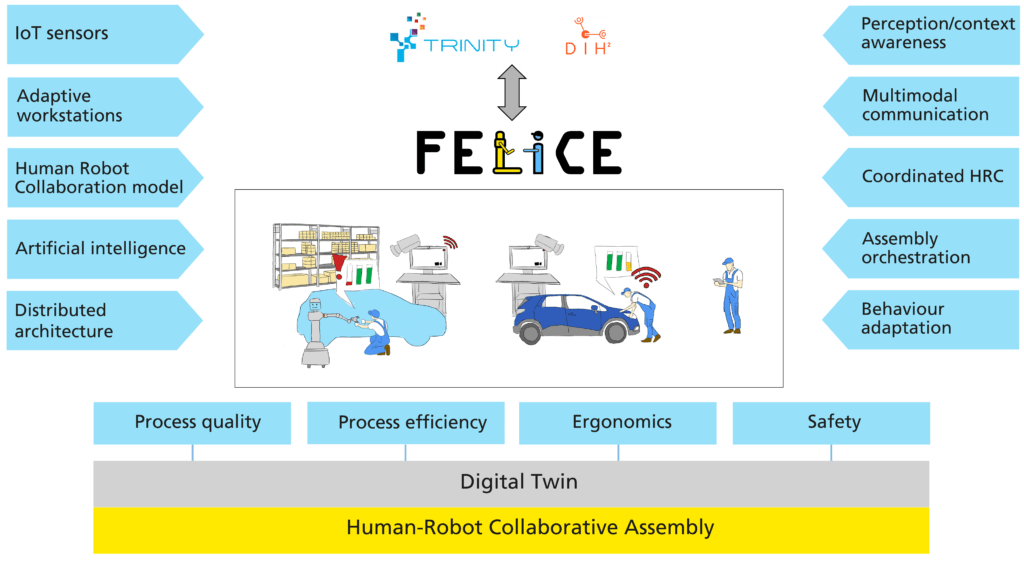

FELICE addresses one of the greatest challenges in robotics, namely the coordinated interaction and combination of human and robot skills. The proposal targets the application priority area of agile production and aspires to design the next-generation assembly processes required to effectively address current and pressing needs in manufacturing. To this end, it envisages adaptive workspaces and a cognitive robot collaborating with workers on assembly lines. FELICE unites multidisciplinary research in collaborative robotics, AI, computer vision, IoT, machine learning, data analytics, cyber-physical systems, process optimization and ergonomics to deliver a modular platform that integrates and harmonizes an array of autonomous and cognitive technologies. The platform aims to increase the agility and productivity of a manual assembly production system, ensure the safety of factory workers and improve their physical and mental well-being. The key to achieving these goals is to develop technologies that combine the accuracy and endurance of robots with the cognitive ability and flexibility of humans. Being inherently more adaptive and configurable, such technologies will help future manufacturing assembly floors become agile, allowing them to respond in a timely manner to customer needs and market changes.

Related developments will proceed along the following directions:

- Implementing perception and cognition capabilities based on many heterogeneous sensors on the shop floor, allowing the system to build context awareness.

- Advancing human-robot collaboration, enabling robots to operate safely and ergonomically alongside humans and to share and reallocate tasks with them, so that an assembly production process can be reconfigured efficiently and flexibly.

- Realizing a manufacturing digital twin, i.e. a virtual representation tightly coupled with production assets and the actual assembly process, enabling the management of operating conditions, the simulation of the assembly process and the optimization of various aspects of its performance.

Objectives

- Develop context awareness via ubiquitous sensing. Process and fuse multiple sensor streams in real time to represent actors and objects on the assembly line. This involves implementing state-of-the-art perception methods that: i) map the factory shop floor; ii) model and analyse the behaviours of human workers, including their actions, intentions and feedback; iii) detect and localize objects of interest; iv) extract verbal commands uttered by workers.

- Ensure safety in human-robot collaborative assembly. Collaborative operation makes safety-critical situations more likely, because workers and robots operate in close proximity.

- Amplify fluency and ergonomics in human-robot collaborative assembly. Fluency refers to how well a robot's actions are coordinated with those of a human when the two perform a task side by side. For effective collaborative assembly, robots should perform tasks concurrently with, or even jointly with, humans. Such collaboration should also improve the physical and cognitive ergonomics of the assembly process for the benefit of workers.

- Orchestrate the assembly process in real time. Monitor the assembly line as a whole in order to continuously make corrective decisions about the operation of the assembly process and the selective use of robots, so as to improve the performance of the overall line.

- Realize collaborative assembly lines for agile production in real production environments. Demonstrate that the project's developments make it easy to define, adjust, deploy and operate new production processes in which the human workforce collaborates smoothly and effectively with intelligent artificial systems that autonomously undertake supporting assembly tasks in real industrial settings.

- Maximize the project's impact. Promote the project to various audiences (e.g., scientific peers, professional organizations, industry, policymakers) and to society as a whole. Transfer knowledge and innovations beyond the project and pave the way to commercialization.

Architecture

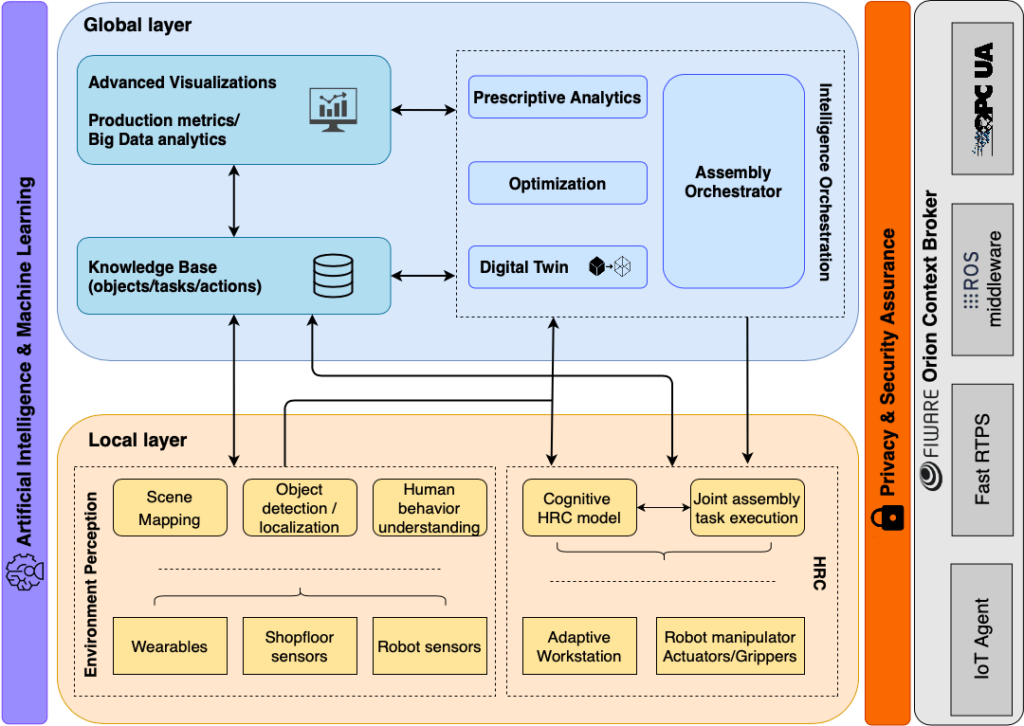

Our framework comprises two layers:

- A local layer, introducing a single collaborative assembly robot that will roam the shop floor assisting workers, together with adaptive workstations able to automatically adjust to the workers' somatometry (body dimensions) and to provide multimodal guidance and notifications on assembly tasks.

- A global layer, which will sense and operate upon the real world via an actionable digital replica of the entire physical assembly line.

The framework rests on the following pillars:

- Smart process monitoring via integration of heterogeneous sensors and devices in industrial environments.

- Collaborative robots with advanced cognitive capabilities, mobility and adaptability for joint task execution, addressing safety and fluency.

- AI system for real-time orchestration and control of adaptive assembly lines.

- A distributed computing architecture and reusable toolkits.

- Technology validation in real industrial environments.

FELICE foresees two environments for experimentation, validation, and demonstration. The first is a small-scale prototyping environment intended to validate technologies before they are applied at a larger scale. The second, which provides that larger setting, is the industrial environment of one of the largest automotive manufacturers in Europe. The consortium considers this effort a timely response to international competition, trends, and progress, pursuing results that are visionary and well beyond the current state of the art.